
// Deep Dive

AI Will Replace Jobs. Cutting Headcount First Is the Mistake.

A historical case for upskilling instead of laying off, and the strategic trap that's about to catch a generation of CEOs.


📝 A note from Mark

You probably noticed the newsletter looks different. The changes are mostly cosmetic. We've always felt our editions were content-rich but a bit visually flat: hard to scan on a phone between meetings, and longer-feeling than they needed to be. So we brought in Claude Design to help raise the production value to match what we think is already strong content. The poll at the bottom of this email takes ten seconds, and your honest answer will shape what we do next.

Mark

Klarna spent 2024 telling the market its AI agent was doing the work of roughly 700 customer service reps, a shift that would drive headcount from about 4,500 toward 3,500. By 2025, the company was hiring humans back. That story is going to repeat over the next two years with different logos. The executives who avoid the trap will look like geniuses; the ones who don't will look like the people who fired their entire web team in 2001 and tried to hire it back in 2003. Yes, AI will replace jobs. The strategic question is what you do with the workforce you already have.

Stop firing your future workforce. Train them. The companies that look unbeatable in 2028 won't be the ones that cut deepest in 2026. They'll be the ones whose CEOs said, in the middle of the noise, "we are not laying off; we are retraining."


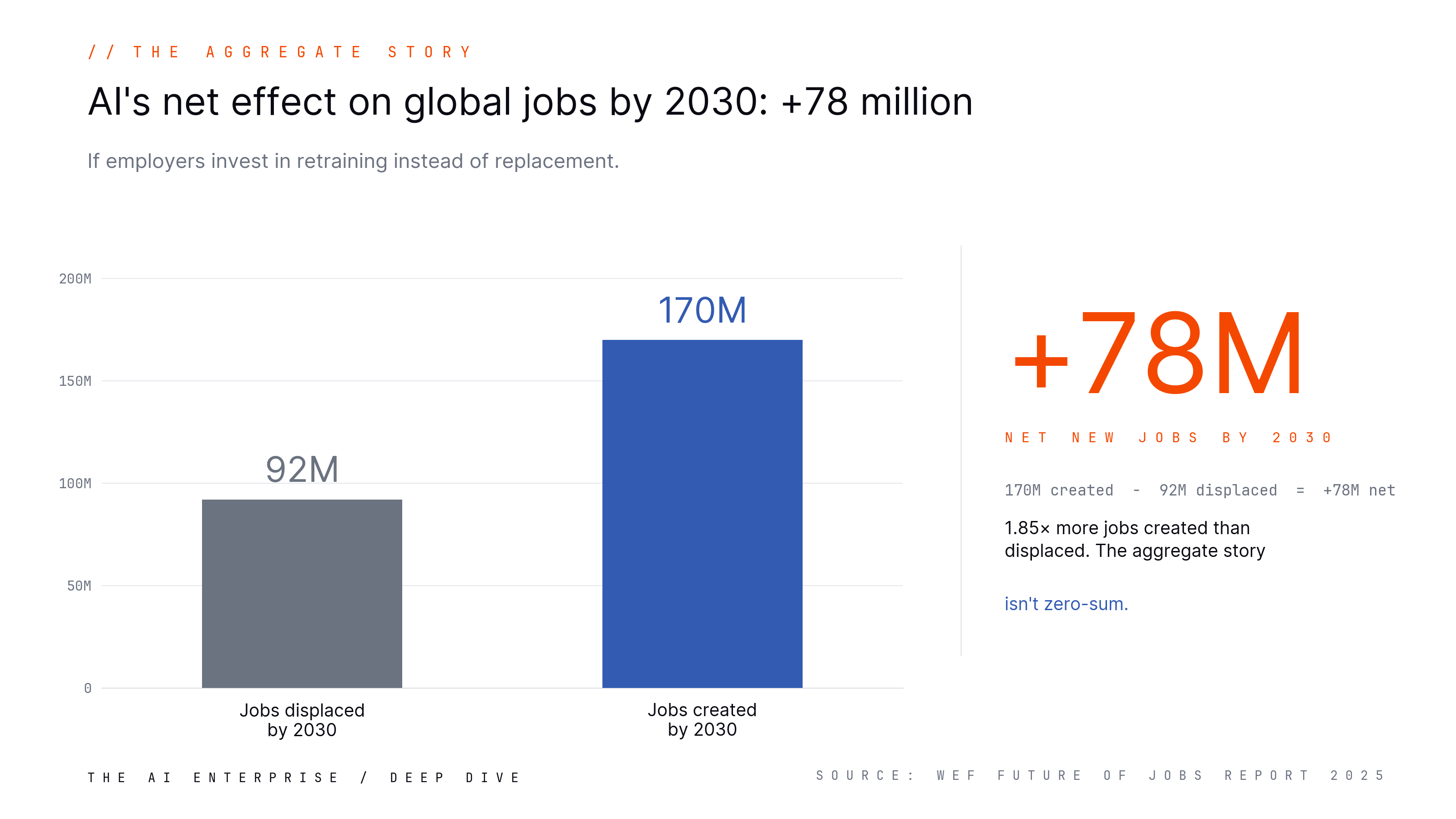

In 2024, Klarna's CEO Sebastian Siemiatkowski went on a media tour with what was, at the time, the cleanest version of the AI-replaces-jobs story in business. The company had built a customer service assistant powered by OpenAI. It was, he said, doing the work of roughly 700 human agents. Headcount was projected to fall from around 4,500 to 3,500. The market loved it. The story got reprinted in every business publication that covers tech.

I watched that story land, and I watched it reverse. By 2025, Siemiatkowski was telling the press that Klarna had pushed too hard on AI substitution, that customer experience had degraded in ways the dashboards didn't catch fast enough, and that the company was hiring humans back. The AI didn't get turned off (it stayed in service), but the headcount strategy got rewritten in public.

The Klarna story is going to get told a hundred more times over the next two years, with different company logos at the top of the press release. I'm writing this now because it points at a strategic mistake that's about to become very common. The executives who avoid it are going to look like geniuses; the ones who don't are going to look like the people who fired their entire web team in 2001 and tried to hire it back in 2003.

To be clear: I'm not arguing that nobody loses a job. Some people will, and some roles genuinely won't have a path forward. But that should be the exception, not the rule. A workforce strategy where layoffs are the headline and upskilling is the footnote has the proportions exactly backwards.

Yes, AI will replace jobs. I'm not going to argue against that. Goldman Sachs estimated in 2023 that generative AI could expose the equivalent of as many as 300 million full-time jobs globally to automation. The World Economic Forum's Future of Jobs Report 2025 projects roughly 92 million jobs displaced by 2030 against 170 million created, a net gain of 78 million. The Anthropic Economic Index keeps publishing data on which occupations are seeing the deepest AI augmentation, and the trend lines are real.


The harder part of the prediction, the part the displacement headlines miss, is this: every previous wave of technological labor disruption created more demand for human work than it destroyed. The workers who captured that new demand were the ones whose employers invested in retraining them. The companies that responded by aggressively cutting headcount were the ones that got caught short when the demand reappeared on the other side. The companies that responded by upskilling won the next decade.

What Is the Substitution Trap

The substitution trap is the gap between what AI can technically do and what an enterprise can durably operate. It compounds at three levels:

Individual level. An AI agent can produce work that looks like the output of an experienced human in the role. It cannot yet recognize the edge cases where its own output is wrong in ways that compound. The person it replaces was holding two jobs: producing the output AND catching the cases where the output broke. The substitution captures one half of that job and silently drops the other.

Team level. AI agents work best inside workflows designed by humans who already know the failure modes. A team whose senior people have been cut loses the ones who could have designed the supervision around the agent. The remaining team is junior relative to the system it's now responsible for operating.

Organizational level. The institutional knowledge of "how this customer behaves," "where the data is dirty," and "what regulatory tripwires this product brushes against" is concentrated in the workforce that's being substituted. Cut the workforce, and the next product decision gets made without the context it would have had three years ago. The damage shows up two or three quarters later, in metrics that don't tie directly back to the layoff.

This is the part the headcount math doesn't capture.
Technology destroys tasks faster than it destroys jobs, and the jobs that survive a clean substitution are usually two jobs in a trench coat.

How It Works in Business Contexts

The history is consistent across three previous waves.

Stage 1: Agriculture to industry. In 1800, roughly 75% of the US workforce was employed in agriculture; by 1900 that figure had fallen to around 41%, and by 2000 it was under 2%. The agricultural labor share fell by well over an order of magnitude across two centuries, and the displaced labor did not vanish into a permanent unemployed underclass. It migrated, painfully and unevenly, into manufacturing, then services, then knowledge work. None of the new categories of work (telephone operator, automotive assembly, computer programmer, financial analyst) were predicted at the start of the transition.

Stage 2: ATMs and bank tellers. This one is the most useful for AI conversations because it's so counterintuitive. James Bessen at Boston University documented that the introduction of ATMs through the 1970s and 1980s did not eliminate the bank teller job. The number of tellers per branch fell from about 20 to 13 between 1988 and 2004, but banks responded by opening more branches to compete for market share, and the total number of tellers continued to rise into the 2000s. The work changed too: cash handling became less important and relationship work more important. The technology made the job different, not gone.

Stage 3: Spreadsheets and bookkeepers. When VisiCalc shipped in October 1979 and Lotus 1-2-3 launched in January 1983 and quickly went mainstream, business journalism predicted the end of the bookkeeper. Bookkeeping employment did contract. What grew, dramatically, was the financial analyst role: a job that existed at the margins before spreadsheets and absorbed an enormous amount of new work after them. The accountants who learned spreadsheets became the analysts. The ones who didn't became surplus.

The pattern is consistent: technology destroys tasks faster than it destroys jobs; surplus capacity gets reabsorbed into higher-value work; and the people who got hurt the most were the ones whose employers did the least to help them move.

How to Implement the Upskilling Play

The most leveraged investment a CEO can make in 2026 is converting the existing workforce into AI-fluent operators. The play has three phases.

Phase 1: Designate the time. Four to six hours a week, paid, structured, and on company time. Treat it as a real investment, not a perk. Practical steps:

Phase 2: Build the curriculum around real work. Not training exercises — actual workflows the worker is learning to operate with AI in the loop. The first agent you ship should make somebody's actual job 20% easier. Practical steps:

Phase 3: Wire it to promotion. If the upskilled workers don't get promoted into the new roles you need filled, the program is a charity, not a strategy. Practical steps:

Key Success Factors

Common Missteps

Four mistakes are already showing up in production decisions:

1. Cutting first, training second. This is the Klarna pattern. Run the headcount math on the AI substitution, take the savings, miss the customer-experience degradation for a quarter or two, then try to rehire. The rehires don't have the institutional knowledge of the people who were cut, the market knows you just did layoffs, and you've burned the upskilling story you needed for the team that remained.

2. Outsourcing AI fluency to universities and bootcamps. A four-year degree cycle cannot keep up with a tool stack that re-versions every quarter. Bootcamps trail the curve by six to twelve months. Cohort programs graduate too slowly to fill an enterprise pipeline. Waiting for the talent market to produce AI-fluent practitioners in volume means waiting for something that won't show up in time.

3. Treating upskilling as a perk, not a budget line. Programs that live in "lunch and learn" slots and rely on employees' personal time die in the second quarter. The companies that get this right put the program on the comp plan, the calendar, and the promotion pipeline, not on the wellness Slack channel.

4. Building the program without a promotion pathway. If the upskilled workers don't get the new roles, they leave for competitors who will pay them for the fluency you just trained. You built the workforce; somebody else gets to use it.

Business Value

ROI Considerations

Competitive Implications

A trusted workforce plus AI fluency is a combination that does not exist on the open market at any price. You cannot buy that workforce. You have to build it. The companies that build it have a moat competitors cannot close in any reasonable timeframe, because those competitors are also bidding for the same scarce external pool, paying the premium, and accepting the multi-quarter productivity ramp. The CEOs who hold the line on upskilling through one or two uncomfortable quarterly reviews end up with the workforce no one else can buy.


// Key Takeaways


// What This Means for Your Planning

If you're sitting on a 2026 workforce decision this quarter, the question isn't "where can AI cut headcount?" It's "where can AI multiply the headcount we already have?"

The aggregate productivity story the AI vendors are telling is real, and it's about to be wrong in the same way the dot-com productivity story was wrong in 1999: directionally right, locally catastrophic for the firms that bet against their own people. The cohort that gets hit hardest is the one closest to the substitution, and the institutional damage shows up two or three quarters later, in metrics that don't tie back to the layoff. By the time it surfaces, the workforce that could have been retrained is at a competitor.

The planning question for the next budget cycle is simple. Do you have a line item for AI fluency training in the company you already run? Not a vendor demo budget. Not a consulting engagement. A line item (owned, dated, measured, with promotions tied to it) for the work of turning the workforce you trust into the workforce you need.

Amara's Law is the one I keep coming back to: we tend to overestimate the impact of a technology in the short term and underestimate it in the long term. Right now, the short-term overestimation is showing up as headcount cuts driven by demos. The long-term underestimation is showing up as a failure to imagine how much new human work the AI buildout will create, and how badly we will need experienced operators who can supervise the systems we're building.

So here's the question to bring to your next planning meeting: What's your retraining budget for the next four quarters, and who owns the promotion pathway it feeds into?

How do you like the new AIE newsletter design?
